Ethics is the New Code Quality

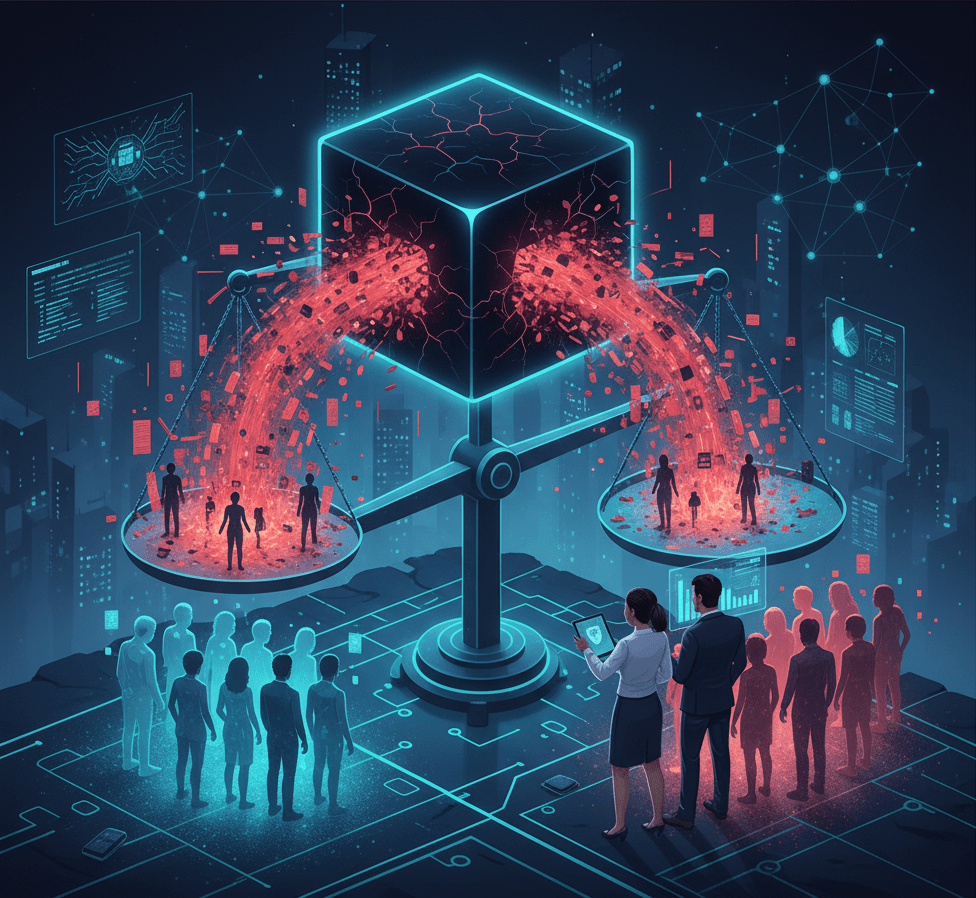

When an algorithm designed to approve loan applications discriminates against a specific zip code, the technical answer is “bad data.” The product answer is “unmanaged risk.” In the age of AI, ethics is no longer a philosophical debate; it is a P0 product bug. It is the single fastest way to destroy trust, invite regulatory scrutiny, and sink a promising product.

The Three Blind Spots of AI Bias

The bias we fear is rarely intentional malice; it’s usually one of three insidious blind spots that Product teams must own:

- Data Blind Spot (The Past is Not the Future): Your training data reflects historical human decisions—and historical human bias. If your product is trained on 10 years of hiring data that favored one gender, the AI will simply automate and scale that unfairness. A great model trained on bad data is just a highly efficient amplifier of bias.

- Edge Case Blind Spot (The Black Box): Many powerful machine learning models are “black boxes,” meaning they produce results without clearly showing why. When a decision impacts a user’s life (e.g., healthcare, finance), this lack of explainability is a massive trust blocker and a regulatory liability. Your users and your regulators will demand to see how a decision was reached.

- Impact Blind Spot (Unintended Consequences): A recommender system that boosts engagement is good for a metric, but what if it also fosters polarization? Product Managers must conduct an Ethical Risk Assessment to map every potential negative externality before launch, anticipating social harm, not just technical errors.
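To make the Data Blind Spot concrete, here is a minimal sketch of a pre-training audit: it compares the share of positive outcomes per group in the historical data. The function name `positive_rate_by_group`, the field names, and the sample records are all hypothetical and made up for illustration; a real audit would run against your own schema and far more rows.

```python
from collections import defaultdict


def positive_rate_by_group(records, group_key, label_key):
    """Return the share of positive labels for each group in the data."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


# Hypothetical historical hiring records (illustrative values only).
history = [
    {"gender": "A", "hired": True},
    {"gender": "A", "hired": True},
    {"gender": "A", "hired": False},
    {"gender": "A", "hired": True},
    {"gender": "B", "hired": True},
    {"gender": "B", "hired": False},
    {"gender": "B", "hired": False},
    {"gender": "B", "hired": False},
]

rates = positive_rate_by_group(history, "gender", "hired")
print(rates)  # group A was hired at 0.75, group B at 0.25
```

A gap this large in the labels is a signal to investigate before training, since a model fit to this data would likely learn the same pattern.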

The PM as AI Ethics Officer

To own the bias, the Product Manager must expand their toolkit. PMs need to formalize AI Governance by:

- Mandating Explainable AI (XAI): Prioritize models where the “why” is visible, even if it sacrifices a few percentage points of accuracy.

- Implementing Continuous Model Monitoring: Bias is not a one-time fix. Models drift. You need dashboards that track disparate impact across user segments long after launch.

- Creating a Responsible AI Framework: Embed clear policies that dictate what data is acceptable, how edge cases are reviewed, and who has the final veto on model deployment.
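The continuous monitoring point above can be sketched as a simple recurring check. This is one possible approach, not a prescribed method: it computes a disparate impact ratio (lowest segment approval rate divided by the highest) for each time window and flags windows that fall below a threshold. The 0.8 default borrows the "four-fifths" rule of thumb from US employment guidance and is an assumption here; the function names, segment names, and logged decisions are hypothetical.

```python
from collections import defaultdict


def disparate_impact_ratio(decisions):
    """decisions: iterable of (segment, approved) pairs.

    Returns the lowest segment approval rate divided by the highest.
    Returns 1.0 when there is nothing to compare.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for segment, ok in decisions:
        totals[segment] += 1
        approved[segment] += int(ok)
    rates = [approved[s] / totals[s] for s in totals]
    if not rates or max(rates) == 0:
        return 1.0
    return min(rates) / max(rates)


def flag_windows(windows, threshold=0.8):
    """Return the labels of windows whose ratio falls below the threshold."""
    return [
        label
        for label, decisions in windows.items()
        if disparate_impact_ratio(decisions) < threshold
    ]


# Hypothetical post-launch decision logs, grouped by reporting window.
windows = {
    "week_1": [("north", True), ("north", True), ("south", True), ("south", True)],
    "week_2": [("north", True), ("north", True), ("south", True), ("south", False)],
}

alerts = flag_windows(windows)
print(alerts)  # ['week_2']
```

In practice the threshold, the segments, and who gets alerted are policy decisions, which is where the Responsible AI Framework comes in.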
