You can’t outspend a global structural shortage, so you have to out-think it. In 2026, the “AI PC” was supposed to be our savior, moving compute away from expensive clouds and onto our desks. But there’s a paradox: the hardware required to run AI locally is the very hardware that’s becoming prohibitively expensive.

The “AI PC” Paradox

To run a useful LLM locally, 16GB of RAM is no longer the “recommended” spec; it’s the bare minimum. 32GB is the new standard. As we saw in Part 1, the cost of these components is skyrocketing.
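A back-of-the-envelope check shows why 16GB gets tight so quickly. The sketch below estimates the memory needed just to hold a model’s weights (parameter count times bits per weight, divided by 8 to get bytes). Everything else in it is an assumption for illustration, not a measurement: the 7-billion-parameter example size, the 1.2x runtime overhead factor, and the 6 GB reserved for the OS and other apps.

```python
def estimate_weights_gb(params_billions: float, bits_per_weight: int) -> float:
    """Memory needed for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


def fits_in_ram(params_billions: float, bits_per_weight: int, ram_gb: float,
                reserved_gb: float = 6.0, overhead: float = 1.2) -> bool:
    """True if weights plus runtime overhead fit in the RAM left for the model.

    reserved_gb (OS and other apps) and overhead (context cache, buffers)
    are illustrative assumptions; real values vary by machine and runtime.
    """
    needed_gb = estimate_weights_gb(params_billions, bits_per_weight) * overhead
    return needed_gb <= ram_gb - reserved_gb


if __name__ == "__main__":
    # Hypothetical 7B-parameter model at three common precisions.
    for bits in (16, 8, 4):
        gb = estimate_weights_gb(7, bits)
        print(f"7B model at {bits}-bit: ~{gb:.1f} GB of weights | "
              f"fits in 16 GB: {fits_in_ram(7, bits, 16)} | "
              f"fits in 32 GB: {fits_in_ram(7, bits, 32)}")
```

Under these assumptions, the same model that overflows a 16GB machine at 16-bit precision fits comfortably once it is quantized to 4 bits, which is the lever the Edge AI approach relies on.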

We are entering an era where the “Replacement Cycle” is dead. If you’re waiting for prices to “normalize” before refreshing your fleet, you might be waiting until 2028. By then, your competition will have already optimized around the shortage.

Three Pillars of the 2026 Strategy

- Inventory as a Financial Hedge: In a “Supercycle,” taking a bold inventory position isn’t just about securing supply; it’s a hedge against rising component prices. For those in the Stock & Sell motion, the “right” inventory is now more valuable than cash. If you have the hardware on the shelf when others don’t, you own the market.

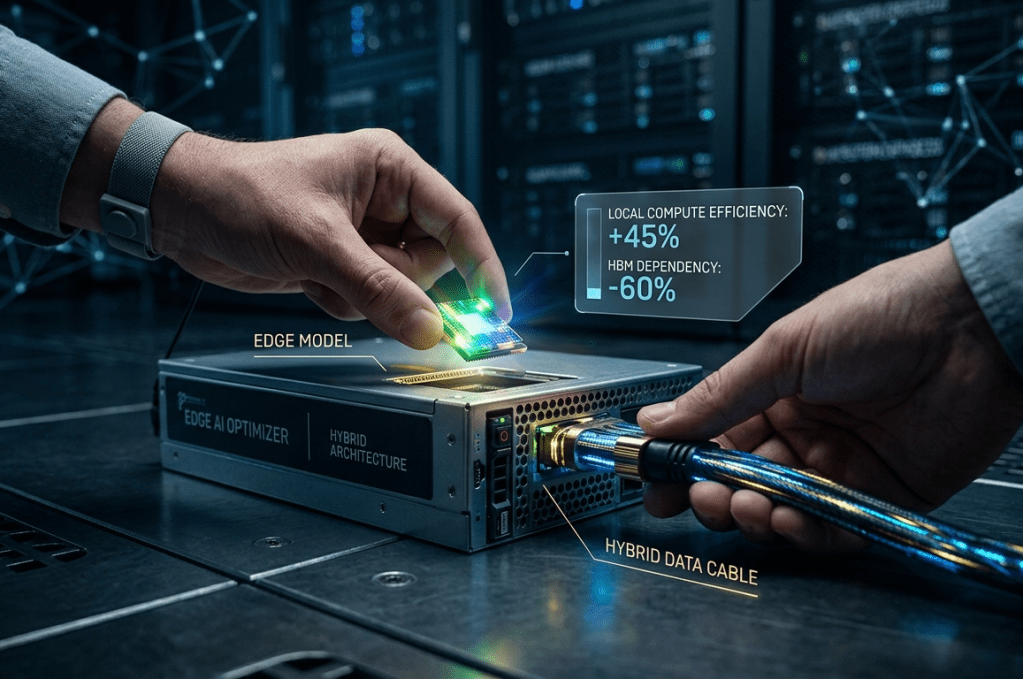

- The Shift to Edge & Hybrid AI: Instead of “brute-forcing” large models on every machine, winners are moving toward Edge AI. This means using smaller, hyper-optimized models that can run on existing hardware or on specialized NPUs (Neural Processing Units), reducing the dependency on large amounts of RAM and SSD storage.

- Lifecycle Extension & Maintenance: If you can’t buy new, you must maintain. We are seeing a massive resurgence in component-level upgrades. Instead of replacing a $1,500 laptop, enterprises are spending $300 to max out the RAM and SSD, extending the useful life of their 2023/2024 investments and improving the ROI on them.
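The upgrade-versus-replace math in the last pillar is simple enough to sketch. The $1,500 and $300 figures come from the example above; the service-life numbers (2 extra years from an upgrade, 4 years from a new laptop) and the 100-device fleet are assumptions chosen only to illustrate the comparison.

```python
def cost_per_year(cost_usd: float, years_of_service: float) -> float:
    """Spend divided by the years of useful service that spend buys."""
    if years_of_service <= 0:
        raise ValueError("years_of_service must be positive")
    return cost_usd / years_of_service


def fleet_deferred_spend(devices: int,
                         replace_cost_usd: float = 1500.0,
                         upgrade_cost_usd: float = 300.0) -> float:
    """Cash not spent this cycle by upgrading a fleet instead of replacing it."""
    return devices * (replace_cost_usd - upgrade_cost_usd)


if __name__ == "__main__":
    # Assumed service lives: an upgrade buys 2 more years, a new laptop lasts 4.
    upgrade = cost_per_year(300, 2)
    replace = cost_per_year(1500, 4)
    print(f"Upgrade: ${upgrade:.0f} per year of service")
    print(f"Replace: ${replace:.0f} per year of service")
    print(f"Deferred spend, 100 devices: ${fleet_deferred_spend(100):,.0f}")
```

Note that the fleet figure is deferred spend, not a permanent saving: the replacement still has to happen eventually, ideally after prices ease.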

The Conclusion

The winners of 2026 won’t be the companies with the most AI; they’ll be the ones who managed their hardware runway most effectively. The “Hardware Tax” is real, but it’s also a filter. It separates the companies that are just “chasing the hype” from the ones building a sustainable, hardware-aware AI future.
